2026: AI Finally Has a Price

For a long time, AI labs were treated like expensive R&D projects that might never justify their burn. January 2026 looks different. MiniMax listed in Hong Kong, raised about 620 million dollars, and its shares doubled on the first trading day. Zhipu (ZAI) came to the same exchange at the end of December, selling roughly 560 million dollars of stock and setting up a second large China model lab as a public company. That is the starting point for how this year feels. AI is not just a demo anymore. It is turning into something markets and institutions plan around. In this piece I’m breaking down some of the trends emerging in the AI world for 2026.

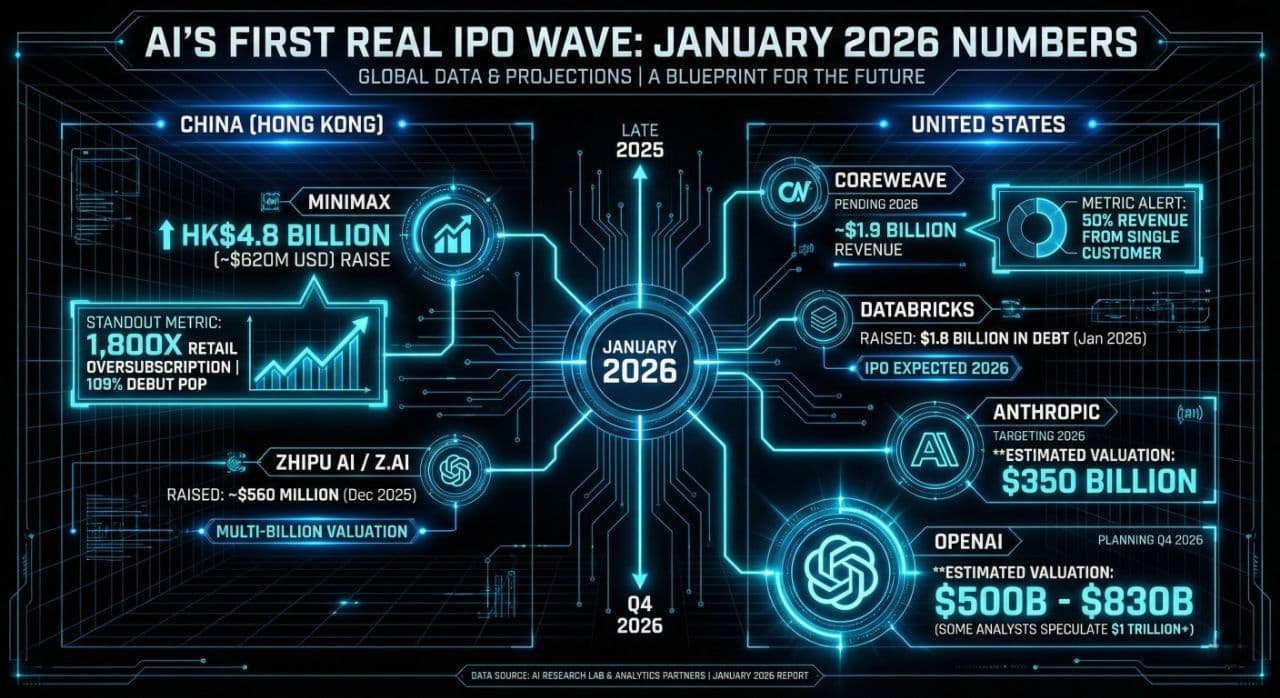

1. The AI IPO wave

MiniMax and Zhipu are useful because they put hard numbers on a story that has been mostly private until now. MiniMax priced its Hong Kong deal at the top of the range, raised about HK$4.8 billion, and saw its retail tranche oversubscribed more than 1,000 times. Zhipu’s offer was smaller but still in the mid nine figures, and it now trades at a multibillion-dollar valuation off the back of its GLM family of models. Together they show that public investors are willing to pay real money for pure model labs, not only for cloud vendors that resell GPUs.

In the United States, the pipeline is filling in. CoreWeave has filed for an IPO and disclosed around 1.9 billion dollars of revenue, more than half of it from a single large customer buying specialized AI cloud capacity. Databricks has taken on about 1.8 billion dollars of extra debt while it prepares its own offering, which is a classic pre‑IPO move for a company that wants flexibility on timing. Reporting around Anthropic points to a possible listing as early as 2026, and OpenAI is now described as working toward a fourth-quarter IPO window at a valuation of around 1 trillion dollars.

We have seen “waves” before, like scooter companies and Web3 exchanges. The difference this time is that these firms earn across many sectors at once. They sell compute, enterprise tooling, ad and productivity copilots, healthcare products, and scientific software on top of the same core models. That is closer to a general infrastructure layer than a single‑use product category.

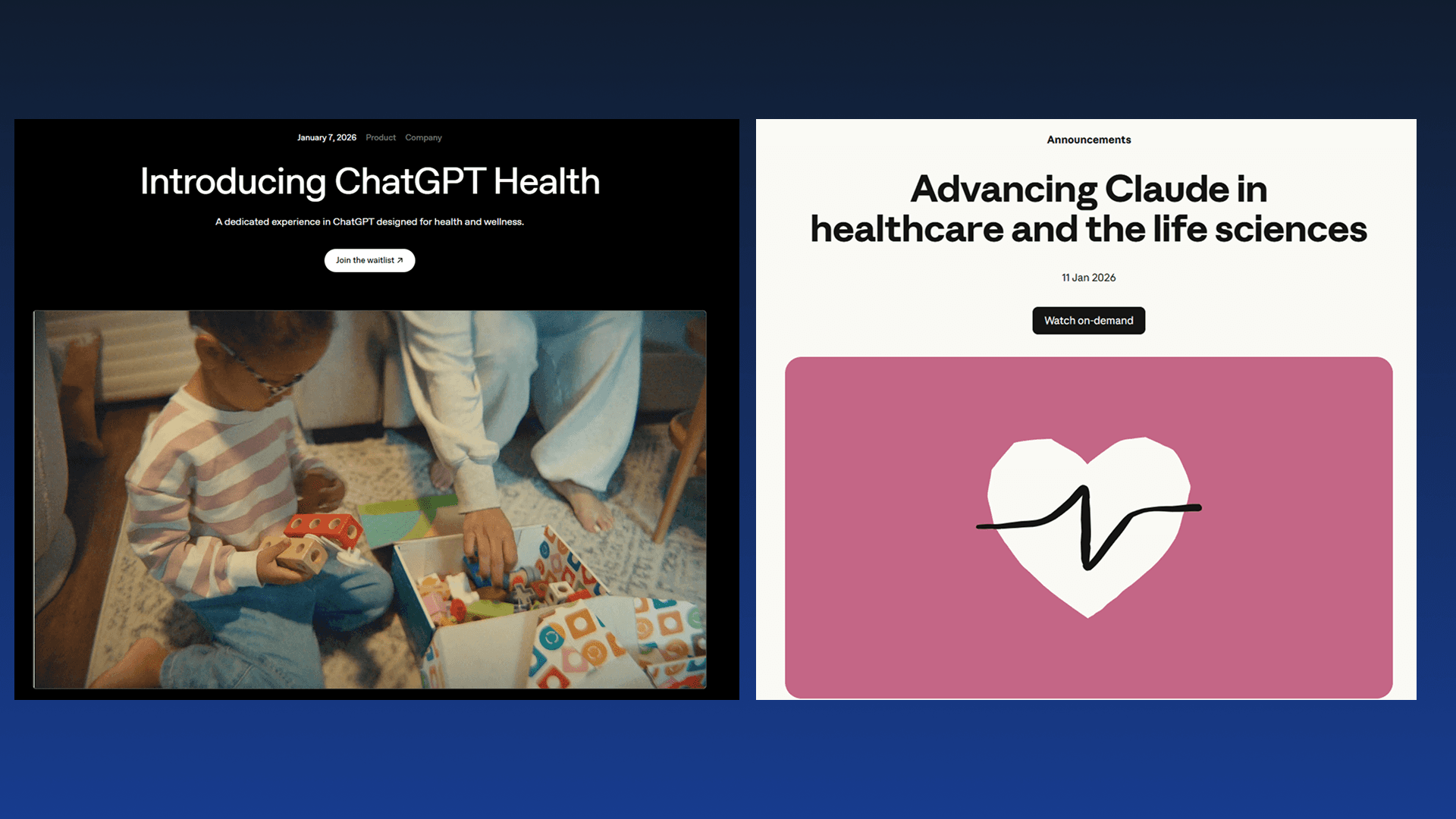

2. Healthcare stops being a side project

If investors are going to treat AI as infrastructure, healthcare is one of the most serious places that idea can be tested. In early January, OpenAI launched OpenAI for Healthcare and ChatGPT Health, which package GPT models for tasks like clinical documentation, chart summarization, and patient messaging inside regulated workflows. The positioning is not “ask a bot medical questions for fun.” It is “wire this into your EHR and let it draft the first pass so clinicians can edit.”

Anthropic has rolled out Claude for Healthcare with its own set of medically tuned features and partnerships with providers and health tech companies. That means at least two large labs are now shipping explicit healthcare offerings instead of leaving the space to small vertical startups. The interesting part is not that AI can read medical text. It is that hospitals and vendors are starting to treat these models as standard components in their stack.

3. Vision and the shift to native multimodal

Until very recently, most large models were text-first. Vision felt like an extension that might or might not work well. That pattern is changing. Moonshot AI’s Kimi K2.5 is an open-weight model released in January that was built to handle text, images, and video from the start, with a large context window and strong results on vision benchmarks. It is not a text model that later learned to look at pictures. Perception is part of the core design.

On the platform side, Google’s latest Gemini updates push more of this into the default experience. Gemini 3 Flash and Pro improve scores on multimodal reasoning tasks and add what Google calls agentic vision. Instead of one static read of an image, the model can step through a scene, zoom in on regions, annotate what it sees, and even run code in the loop while it reasons. That is closer to how people actually inspect complex diagrams, dashboards, or screenshots.

I am keeping the forward-looking calls for a dedicated 2026 predictions piece. For this one, the point is simple. A text-only flagship model now feels incomplete, and many of the models coming out this year will be multimodal.

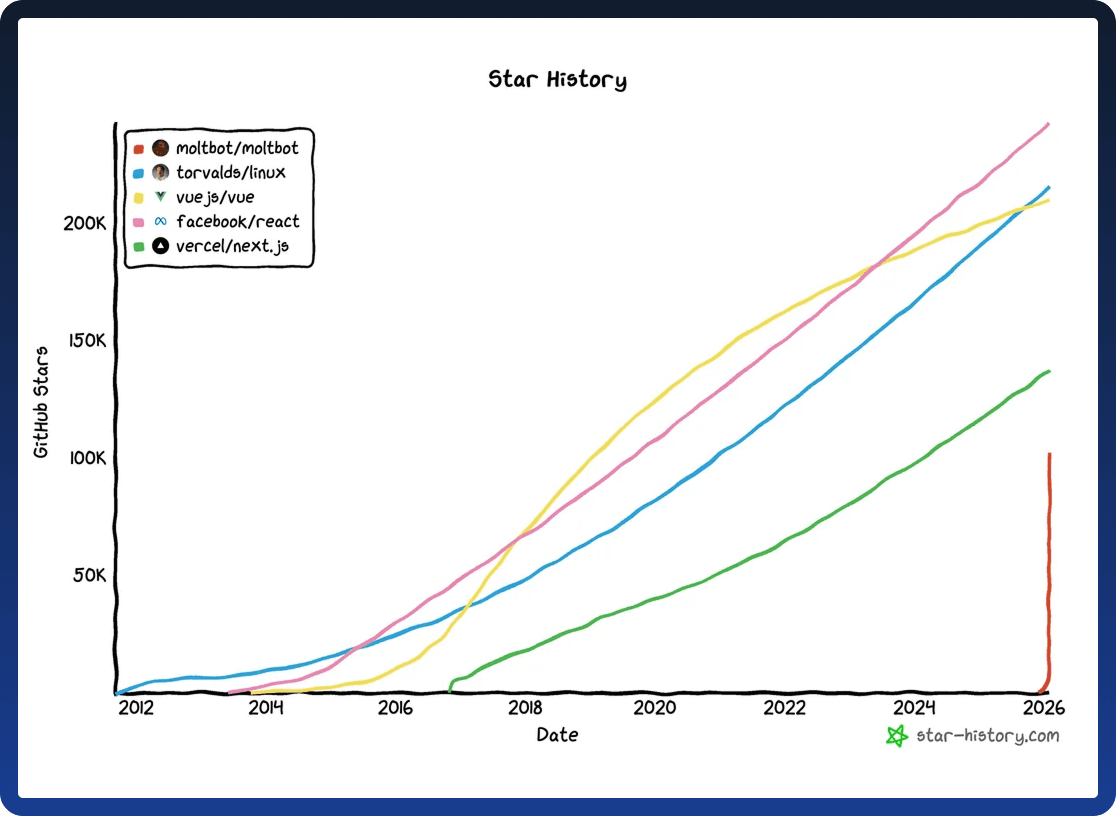

4. Agents at home and AI in the science stack

At the personal level, you can see the same shift in how people are starting to run agents. Clawdbot, which has been rebranded as Moltbot after pressure over the original name, is an open-source assistant that you can host yourself and connect to WhatsApp, Telegram, Discord, Slack, and your browser. It can send messages, trigger scripts, control smart devices, and coordinate tasks from a small local or cloud machine, and in January it picked up a surge of users willing to run their own hardware for that. People do not just want another chat interface. They want the model to live inside their existing channels with memory and the ability to act.

On the scientific side, OpenAI’s Prism product hints at how tooling might change for researchers. Prism is a free LaTeX-native workspace that integrates models into drafting and editing papers while keeping the source in LaTeX rather than a proprietary editor. It is closer to “Overleaf with an integrated model” than a generic word processor with a plugin. In parallel, partnerships like the Gates Foundation working with OpenAI on health and development projects show labs stepping into long-running research programs instead of staying on the sidelines.

If 2023 to 2025 was the era of AI demos and prototypes, January 2026 is when you can point to IPO filings, hospital deployments, browser features, and research tools and see the same pattern. These models are starting to look less like one-off apps and more like shared infrastructure that other people build on.