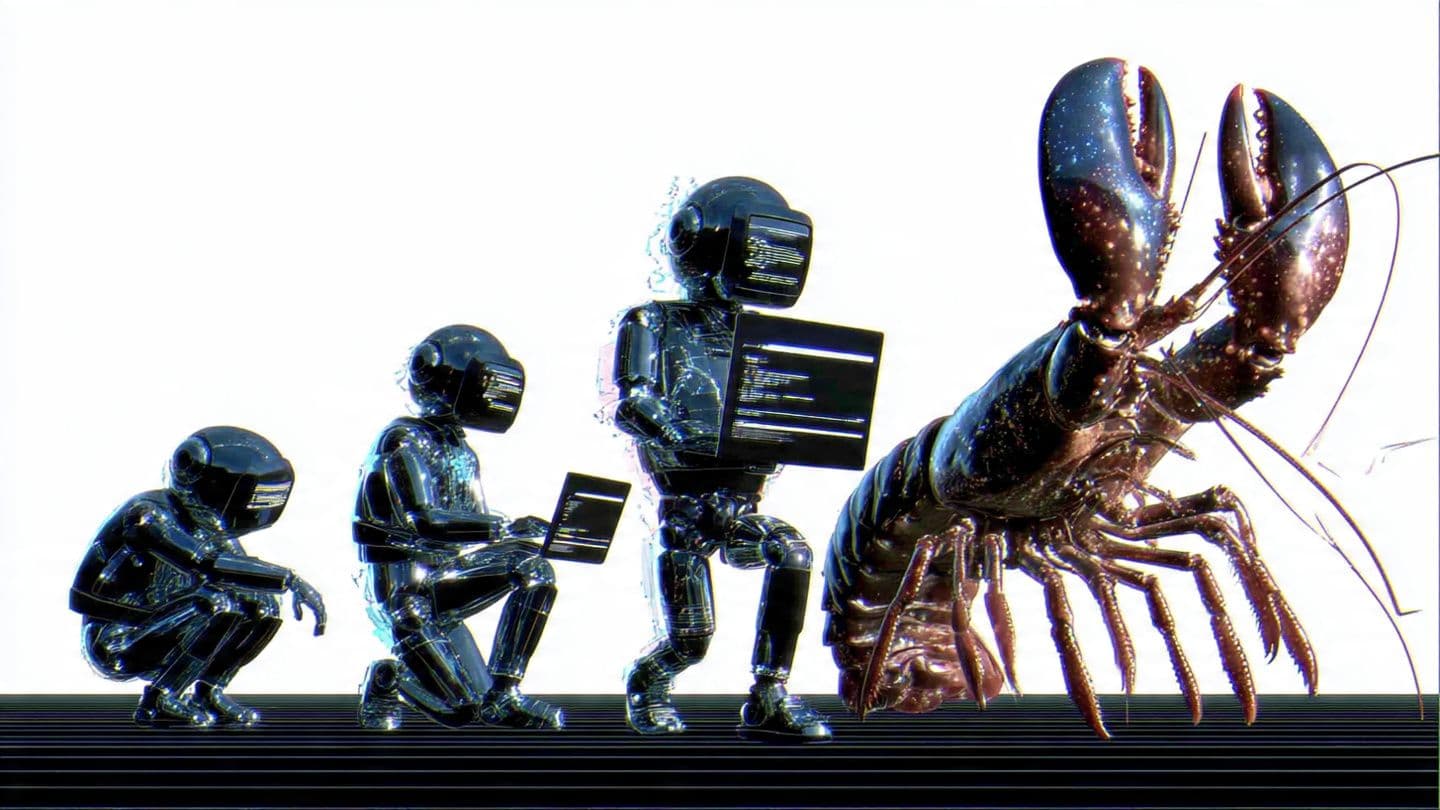

From Chatbots to Agents: How We Got Here

AI agents did not appear out of nowhere. Every capability you see today was unlocked in steps, each one building on the last. This is the full story of how we got from a chat window to autonomous agents, and why the pace is only picking up from here.

In November 2022, OpenAI released ChatGPT and the world collectively lost its mind. Millions of people typed questions into a box and got coherent answers back. It felt like magic.

But it was still just a box.

You went to it. You typed. It responded. The model sat inside a window and that window was its entire world. Anthropic launched Claude, Microsoft shipped Copilot, Google put out Bard. All of them worked the same way. Text in, text out. Impressive, but passive.

The first real crack in that pattern came in June 2023, when OpenAI introduced function calling, now more commonly called tool calling.

Tool calling gave models the ability to reach outside the conversation. Instead of only generating text, a model could decide mid-task to call an external function, check a live API, pull real data, and use that result in its response. The model could now act on things, not just talk about them.

But early tool calling was rough. Models would call the wrong tool, lose track mid-task, or need constant steering to finish what they started. To put a number on it: in 2023, the best models could solve just 4.4% of real-world software engineering tasks on the SWE-bench benchmark. On OSWorld, which tests how well a model completes computer-based tasks using tools, early 2024 scores were sitting around 5%. The capability existed. The reliability did not.
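To make the tool-calling mechanism concrete, here is a minimal sketch of the loop in Python. Everything in it is an assumption made for illustration: `fake_model` is a stub standing in for a real model, `get_weather` is a hypothetical tool with canned data, and the message format is simplified rather than any vendor's actual API. The point is only the shape of the loop: the model either answers in text or asks for a tool, and the tool result is fed back into the conversation.

```python
import json
from typing import Callable


def get_weather(city: str) -> str:
    """Hypothetical tool: stands in for a live API lookup."""
    return f"18C and cloudy in {city}"


# Registry mapping tool names to plain Python functions.
TOOLS: dict[str, Callable[..., str]] = {"get_weather": get_weather}


def fake_model(messages: list[dict]) -> dict:
    """Stub standing in for a real model (an assumption, not a real API).

    It requests a tool once, then answers using the tool result
    it finds in the conversation history.
    """
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {
            "type": "tool_call",
            "name": "get_weather",
            "arguments": json.dumps({"city": "Vienna"}),
        }
    return {"type": "text", "content": f"Right now it is {tool_results[-1]['content']}."}


def run(user_prompt: str, max_steps: int = 5) -> str:
    """Loop until the model produces text or the step limit is hit."""
    messages = [{"role": "user", "content": user_prompt}]
    for _ in range(max_steps):
        reply = fake_model(messages)
        if reply["type"] == "text":
            return reply["content"]
        tool = TOOLS.get(reply["name"])
        if tool is None:
            # Early models often asked for tools that did not exist.
            result = f"error: unknown tool {reply['name']}"
        else:
            result = tool(**json.loads(reply["arguments"]))
        # Feed the tool result back so the model can use it next turn.
        messages.append({"role": "tool", "name": reply["name"], "content": result})
    return "stopped: step limit reached"


print(run("What is the weather in Vienna?"))
```

The step limit and the unknown-tool branch are there because of the failure modes described above: a model that calls the wrong tool or loses track needs a loop that can recover or stop.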

That started changing in 2024, and the software industry was where you could see it most clearly.

GitHub Copilot was already inside developers' code editors, but Cursor showed something further. The model was not just suggesting code; it was reaching into the file system and making changes. Watching that happen made the idea of AI taking actions feel real in a way it had not before. Meanwhile, the chatbots themselves were expanding: web search to get around knowledge cutoff dates, image generation, the Canvas feature for editing text inline, and Deep Research by late 2024. Each addition was the same idea expressed differently. AI doing things, not just saying things.

But every tool integration was still custom work. If you wanted a model to talk to Slack, you wrote code for that. Google Drive, same thing. There was no standard. Connecting AI to your tools was a one-off engineering job every single time.

That changed in November 2024 when Anthropic released the Model Context Protocol, MCP. MCP is a standardized way for AI models to connect to external services. Think of it as a universal adapter. You define the connection once and any MCP-compatible model can use it. That removed a huge amount of friction from building with AI agents, and it set the stage for what happened in 2025.
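The "define once, use anywhere" idea can be sketched in a few lines of Python. This is not the MCP SDK or its wire format. `ToolServer`, `AgentClient`, and the `post_message` tool are hypothetical names invented for this illustration, and the `list_tools` / `call_tool` methods are only loosely modeled on the discover-then-invoke pattern the protocol uses. What the sketch shows is that once a service exposes its tools through one shared interface, any client that speaks that interface can use them without custom glue code.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToolServer:
    """Hypothetical stand-in for a service exposing tools via one shared interface."""
    name: str
    _tools: dict = field(default_factory=dict)

    def register(self, tool_name: str, description: str, fn: Callable[..., str]) -> None:
        # The integration is defined here, once.
        self._tools[tool_name] = {"description": description, "fn": fn}

    def list_tools(self) -> list[dict]:
        # Discovery: clients ask what is available instead of hardcoding it.
        return [{"name": n, "description": t["description"]} for n, t in self._tools.items()]

    def call_tool(self, tool_name: str, **arguments) -> str:
        return self._tools[tool_name]["fn"](**arguments)


class AgentClient:
    """Any client that speaks the shared interface can use any server."""

    def __init__(self, label: str):
        self.label = label

    def use(self, server: ToolServer, tool_name: str, **arguments) -> str:
        available = {t["name"] for t in server.list_tools()}
        if tool_name not in available:
            raise KeyError(f"{server.name} has no tool {tool_name!r}")
        return f"[{self.label}] {server.call_tool(tool_name, **arguments)}"


# One definition of the (fake) Slack integration...
slack = ToolServer("slack")
slack.register(
    "post_message",
    "Post text to a channel",
    lambda channel, text: f"posted to #{channel}: {text}",
)

# ...used unchanged by two different clients.
for client in (AgentClient("model-a"), AgentClient("model-b")):
    print(client.use(slack, "post_message", channel="general", text="hello"))
```

Before a shared interface existed, each of those clients would have needed its own Slack-specific code, which is the one-off engineering work described earlier.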

2025 is when everything started compounding.

In March 2025, the Chinese startup Monica.im launched Manus, an AI agent that would take a task and go handle it end to end. Browse, research, write, organize. Watching it work made agentic AI feel concrete for a broad audience, not just developers. Around the same time, n8n, the workflow automation tool, exploded in popularity as people realized they could connect AI to their existing services without writing custom code for every integration. n8n became a unicorn that year.

The benchmark numbers from 2025 tell you how fast the underlying models were improving. By May 2025, OpenAI's CUA model hit 42.9% on OSWorld, up from 5% just over a year earlier. By December 2025, Simular's Agent S crossed 72.6% on the same benchmark, passing the human baseline of 72.36% for the first time. On SWE-bench Verified, Claude Sonnet 4.5 reached 77.2% in October 2025, up from scores in the low 40s earlier that year. On τ-bench, which specifically tests whether models can complete multi-step tool-calling tasks across a full conversation without going off track, top models were hitting above 90% accuracy by the end of 2025. The models had become reliable enough to be trusted with real work.

2025 still had friction, though. You still had to connect the nodes manually in tools like n8n. Most integrations lived within specific platforms and their rules. The potential was clear. The setup was still on you.

Then OpenClaw went viral in January 2026.

OpenClaw was built by Austrian developer Peter Steinberger. It started under a different name in late 2025, rebranded twice, and landed as OpenClaw. Within the first week it crossed 100,000 GitHub stars. By March 2026 it was at 250,000, more than any project in GitHub's history.

What made it land so hard is that it brings together everything that had been building for three years.

OpenClaw runs in its own environment, a computer you give it, whether that is a Mac Mini at home or a cloud server. It does not live inside any platform's walled garden. When you give it a task, it figures out the connections itself. It sets up integrations, writes cron jobs for recurring work, reads and writes files. If it needs an API key, it asks you for it and handles the rest. You do not touch a config file. That is only possible because the underlying models are now reliable enough at tool calling to do that without falling apart mid-task. A year ago they were not.

OpenClaw is fully open source, and that matters for how fast things are moving. The project has over 1,200 contributors and ships updates at a rate no single lab could match. In February 2026, OpenAI hired Steinberger and backed OpenClaw through a foundation structure. It stays open source but now has the weight of a major lab behind it.

The enterprise side is moving in the same direction. Anthropic has Claude Cowork. Microsoft announced Copilot Cowork in March 2026, powered by Claude, built for agentic workflows inside organizations. What started as a developer tool is becoming standard infrastructure.

Three years ago, AI was a chat window. The road from there to here ran through tool calling, coding IDEs, MCP, and a lot of benchmark scores quietly climbing in the background. OpenClaw is where that road has arrived, for now.

Here is the thing about this moment: what you are looking at today is the worst it will ever be. Everything you see right now, the tools, the speed, the capabilities, will look primitive twelve months from now. That is not hype. It is the pattern this space has followed for three years running.

If any of this feels overwhelming, that is a normal response. A lot is moving fast. But the right move is not to tune it out. The people who engaged early, even imperfectly, are the ones who are ahead today.